What is standard UX benchmarking?

The best place to start when thinking about benchmark testing for UX work is with a basic definition. The Nielsen Norman Group says, “UX benchmarking refers to evaluating a product or service’s user experience by using metrics to gauge its relative performance against a meaningful standard.” Those standards can be industry standards, competitors, or, most often in benchmarking, previous versions of your own product or service.

To gather the data for these benchmarks, you need to come up with a list of tasks and the metrics to be measured for each task. Taking a typical e-commerce site as an example, the first task might be to find a product category on the website. The metrics for a task like that can include a pass/fail rate, task timings, ease-of-use ratings, etc. Once you have those metrics, you compare them against whatever “meaningful standard” you decided on, most often your own previous benchmarks. Comparing those metrics over time helps you understand whether the changes being made to your product or service are effective: task timings going down while pass rates go up means you’re doing a good job, while an increasing rate of failure means something has gone wrong.
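As a rough illustration of that comparison, here is a minimal Python sketch. It assumes a hypothetical record layout (`TaskResult`), a 1 to 7 ease-of-use scale, and made-up numbers for the "find a product category" task; none of these come from a real study, and your own metrics and scales may differ.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class TaskResult:
    """One participant's attempt at one task (hypothetical record layout)."""
    passed: bool
    seconds: float    # time on task
    ease_rating: int  # assumed scale: 1 (very hard) to 7 (very easy)


def summarize(results):
    """Reduce one round's raw results for a task to three benchmark metrics."""
    return {
        "pass_rate": sum(r.passed for r in results) / len(results),
        "median_seconds": median([r.seconds for r in results]),
        "mean_ease": mean([r.ease_rating for r in results]),
    }


def compare(previous, current):
    """Change in each metric from the previous round to the current one."""
    return {name: current[name] - previous[name] for name in previous}


# Made-up numbers for the "find a product category" task, for illustration only.
round_1 = [TaskResult(True, 40.0, 6), TaskResult(True, 50.0, 5),
           TaskResult(False, 90.0, 2), TaskResult(True, 60.0, 5)]
round_2 = [TaskResult(True, 30.0, 6), TaskResult(True, 35.0, 6),
           TaskResult(True, 45.0, 5), TaskResult(True, 50.0, 7)]

delta = compare(summarize(round_1), summarize(round_2))
print(delta)
```

In this made-up case the pass rate and ease rating go up while the median time goes down, which is the "doing a good job" pattern described above. With the small samples typical of moderated studies, treat differences like these as directional rather than statistically conclusive.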

Being able to compare those metrics over time is incredibly helpful for identifying areas for growth or improvement, as well as for seeing where you have already improved. However, these benchmarks are very focused on the quantitative side of research and don’t always help answer why these changes in metrics are being seen. It’s helpful to know that something on your site is working worse than before, but it’s hard to act efficiently to correct that without understanding why it’s not working as well. That’s where our qualitative benchmarking methodology comes into the picture!

What is qualitative UX benchmarking?

So what is qualitative benchmarking? I like to think of the qualitative benchmarking methodology as the “Goldilocks” of benchmarking: a balance of comparable quantitative data and the qualitative data that helps you understand why something changed (and what to do about it). Where a standard quantitative benchmark can be automated and run without a moderator (remember, it’s the same tasks and same questions being asked each time), a qualitative benchmark introduces aspects of more standard usability testing conducted by a moderator.

In a standard, quantitative-focused benchmark, your participants would typically go straight from one task into the next immediately after answering any rating questions. In a qualitative benchmark, we add some discussion about each task after participants have answered their rating questions for it. Instead of going right into the next task, the moderator walks back through the prior task with the participant and asks questions about the experience and what could be done to improve it, similar to how we conduct our standard usability tests. That allows us not only to look to the benchmark data that tells us whether something has improved, but also to gain insights from the target audience as to why it has or hasn’t.

Going back to our e-commerce example of looking for a product category, our data might tell us that after the latest site update (that might have included a complete taxonomy overhaul and redesign of site navigation, for example) it took almost twice as long for participants to find the category and their ease-of-use ratings went down notably. The discussion with our participants adds insights to help address that issue: maybe it’s because the taxonomy changed and participants didn’t understand the new categories, maybe it’s because you changed your site structure and put all of the categories in a hamburger menu that participants struggled to locate, or maybe it’s a cosmetic issue where they simply couldn’t read the category titles.

Qualitative benchmarking is the best of both worlds: quantitative insights you can rely on, plus the qualitative insights to make more informed decisions about what to do next.

When should you benchmark test?

Determining the right time and frequency to benchmark test isn’t always clear-cut for our clients. It’s not uncommon for companies to come into benchmark testing with a set schedule in mind, wanting to test every quarter for example, without thinking through whether that’s the most beneficial schedule for benchmark testing.

The best way to determine how often to benchmark test is to consider your development cycle and how often or how quickly you’re able to make changes. There’s very limited benefit from spending time, effort, and money on another benchmark study if you haven’t actually made any changes since the previous benchmark. You’d almost always be better off doing smaller scale, more targeted research into a specific area of your product until you’ve made notable changes to test with a benchmark study.

Another consideration when thinking about how often to conduct benchmark testing is whether you’re evaluating competitors along with your own product. If you want to start comparing to the competition, or a competitor you’ve benchmarked against in the past has made big changes, that’s also a great time to run another benchmark study even if you haven’t necessarily made big changes to your own product.

How do you get ready for qualitative benchmarks?

As most UX professionals will already know, there’s legwork that needs to be done before any UX research, and that absolutely includes benchmark testing. You have to identify your target users, determine what tasks you want them to try, decide on any metrics you want to gather, and make sure that you have a way to actually conduct the test (whether that’s a live site, a prototype, or something else entirely). This up-front work is important on any study, but is even more critical on benchmark studies for one main reason: repeatability and consistency are absolutely critical for effective benchmark studies.

Simply put, if your goal is to compare changes to your product over time, you can’t change what you’re testing or how you’re testing it between benchmark studies; otherwise you can’t compare the data accurately. If you change a task in any notable way in later rounds of research, you can’t reliably compare it to the previously collected data. If you add a task, or add rating questions, in later rounds of research, you simply don’t have previous data to compare to, taking away one of the key reasons for conducting benchmark testing. A knowledgeable researcher can help you design a “future-proof” research plan that takes these potential limitations into consideration from the start.
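One way to make that consistency rule concrete is to write the study plan down as data and check it before each new round. The sketch below is only an illustration: `TaskDefinition`, its fields, and the example tasks are hypothetical, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskDefinition:
    """Hypothetical description of one benchmark task."""
    task_id: str
    prompt: str              # exact wording shown to participants
    pass_criteria: str       # strict definition of what counts as a pass
    rating_questions: tuple  # rating questions asked after the task


def check_plan(previous_plan, current_plan):
    """Sort the current plan's task ids into comparable, changed, and new."""
    previous = {task.task_id: task for task in previous_plan}
    report = {"comparable": [], "changed": [], "new": []}
    for task in current_plan:
        if task.task_id not in previous:
            report["new"].append(task.task_id)      # no earlier data exists
        elif task == previous[task.task_id]:
            report["comparable"].append(task.task_id)
        else:
            report["changed"].append(task.task_id)  # earlier data not reliable
    return report


# Illustrative plans only; the wording and criteria are made up.
ease = ("How easy was this task?",)
plan_v1 = [
    TaskDefinition("find-category", "Find the hiking boots category.",
                   "Reaches the category page unaided", ease),
    TaskDefinition("checkout", "Buy the item in your cart.",
                   "Reaches the order confirmation page", ease),
]
plan_v2 = [
    plan_v1[0],
    TaskDefinition("checkout", "Buy the item in your cart as a guest.",
                   "Reaches the order confirmation page", ease),
    TaskDefinition("track-order", "Find where your order is.",
                   "Opens the order status page", ease),
]

report = check_plan(plan_v1, plan_v2)
print(report)
```

Only the tasks listed under `comparable` can be benchmarked against the earlier round. Anything under `changed` or `new` effectively starts a fresh baseline.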

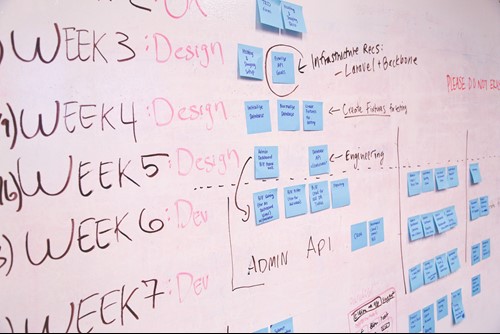

It’s essential to work together as a team to get ready for benchmark testing. Researchers, product managers/owners, developers, and anyone else involved in the research all have to be on the same page to get the most out of this sort of research. You’ll need to work together to determine the task list, and along with it strict definitions for what counts as a pass or a fail for each task. You’ll need to determine any and all rating questions you want to ask, and how you want to ask them. You’ll need to make sure that the test environment is ready for testing, whether that’s turning off A/B testing on a live site, making sure a test environment is ready, creating a prototype version, or something else entirely.

Yes, it’s a lot of up-front work to get ready to start benchmark testing, but the good news here is that most of the work only has to be done once! Once you’ve determined all of these things, you simply reuse them for future benchmark studies. For those subsequent studies there shouldn’t be legwork to create tasks or come up with rating questions, because the whole point of the research is to be consistent and repeatable between rounds! Sure, there’s still legwork to find new participants for each round of research and to make sure the testing environment is ready to go, but each benchmark should be easier to run than the previous one.

There is significant value to be found in standard, quantitative-focused benchmark testing, just like there’s significant value to be found in qualitative usability testing. If you’re struggling to decide between those two approaches, you might find that these qualitative benchmark tests offer a “best of both worlds” compromise: you’re able to see changes over time, both positive and negative, and understand those changes from your users’ point of view. Gathering the qualitative insights along with the quantitative data simply tells a more complete story than doing either without the other.